Ollama is an open-source platform designed for training and deploying custom machine-learning models locally. It enables users to work without relying on cloud services. It supports various model architectures, offering flexibility for diverse applications. Ideal for researchers and developers seeking privacy and control over their data, it facilitates offline AI development and experimentation. (This paragraph was written using the deepseek-r1:32b model running on an Apple Mac Mini M4 Pro).

We will use Ollama as a core tool to experiment with Large Language Models running locally on our LLM Workstation.

Installing and Running the Tool

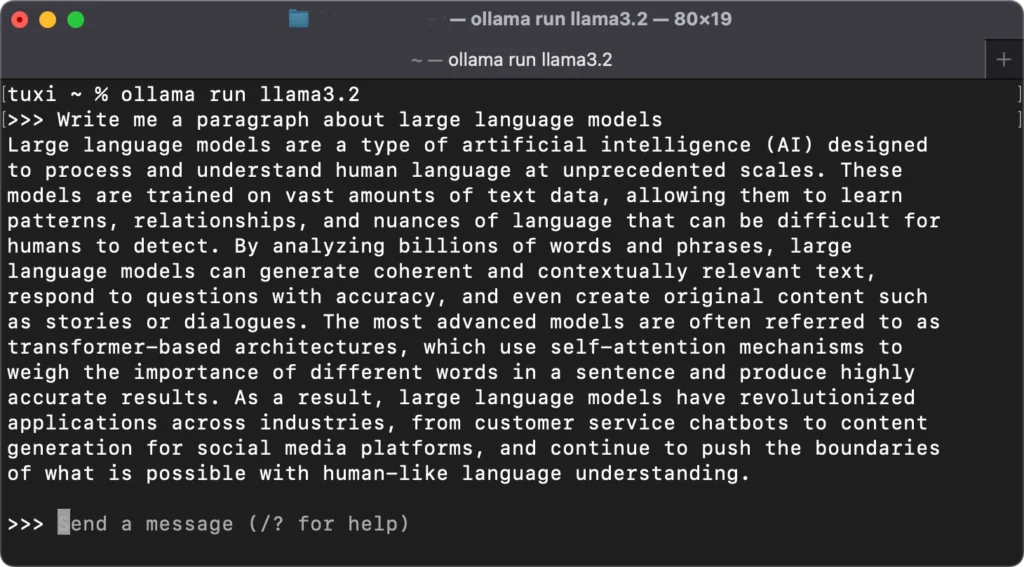

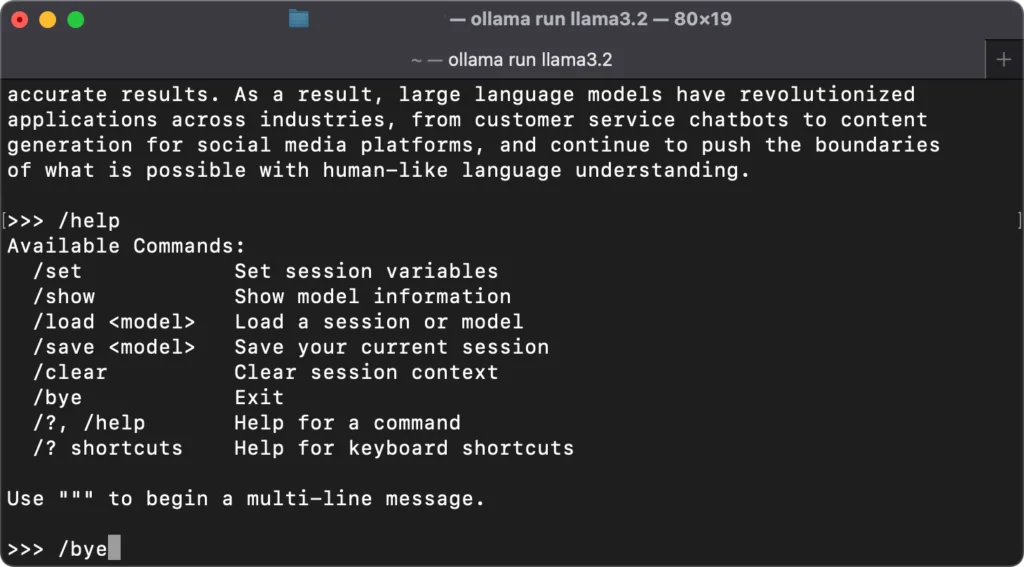

You can install Ollama by downloading and running the installer. Next, choose a model (e.g., llama3.2) and execute the following command to download and run it.

% ollama run llama3.2

We’ll ask the model to write a paragraph about large language models.

Commands that control the model session are entered with a leading /. Here is a list of the options.
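
The same prompt can also be sent programmatically instead of through the interactive session. The following is a minimal sketch, assuming that Ollama is already running with the llama3.2 model downloaded and that its HTTP API is listening on the default http://localhost:11434 address described in the next section. The helper names `build_generate_request` and `generate` are illustrative and are not part of Ollama.

```python
import json
import urllib.request

# Assumption: Ollama's API is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt, model="llama3.2", base_url=OLLAMA_URL):
    """Build a POST request for Ollama's /api/generate endpoint."""
    # "stream": False asks for one complete JSON reply instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama3.2", base_url=OLLAMA_URL, timeout=300):
    """Send the prompt to the model and return the generated text."""
    request = build_generate_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # The generated text is returned in the "response" field.
    return body["response"]
```

With the model running, calling `print(generate("Write a paragraph about large language models."))` should print a paragraph similar to the one produced in the interactive session. Larger models can take a while to answer, which is why the sketch uses a generous timeout.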

Exposing Ollama on Our Network

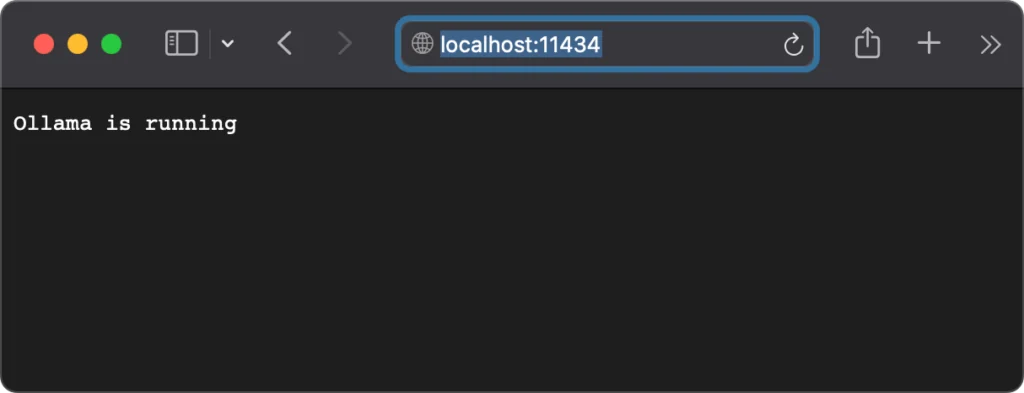

An API that lets applications interact with or change the running model is available at http://localhost:11434. We want to make the API accessible across our network. To do so, we can create a launchd property list (plist) that sets the OLLAMA_HOST environment variable to “0.0.0.0:11434”, which exposes the API on all of the workstation’s network interfaces. The plist file should be named com.ollama.plist and saved in ~/Library/LaunchAgents. Its contents are shown below.

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">

<plist version="1.0">

<dict>

<key>Label</key>

<string>com.ollama</string>

<key>Program</key>

<string>/Applications/Ollama.app/Contents/MacOS/Ollama</string>

<key>EnvironmentVariables</key>

<dict>

<key>OLLAMA_HOST</key>

<string>0.0.0.0:11434</string>

</dict>

<key>RunAtLoad</key>

<true/>

<key>KeepAlive</key>

<true/>

</dict>

</plist>

Finally, make sure that Ollama is stopped and run the following command. Then restart Ollama.

% launchctl load ~/Library/LaunchAgents/com.ollama.plist

You can now access the API from anywhere on your network at http://<your workstation IP>:11434.
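
To confirm that the API is reachable from another machine, a short script can test the port and list the installed models. This is a sketch under stated assumptions: the address 192.168.1.50 used in the usage note is a placeholder for your workstation's IP, and the helper names (`api_url`, `port_is_open`, `parse_model_names`, `list_models`) are illustrative. It relies on Ollama's /api/tags endpoint, which returns the locally available models.

```python
import json
import socket
import urllib.request


def api_url(host, path, port=11434):
    """Build a URL for the Ollama API on the given host."""
    return f"http://{host}:{port}{path}"


def port_is_open(host, port=11434, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def parse_model_names(tags_json):
    """Extract model names from the JSON text returned by /api/tags."""
    return [model["name"] for model in json.loads(tags_json).get("models", [])]


def list_models(host, port=11434, timeout=10):
    """Ask the Ollama server on another machine which models it has."""
    url = api_url(host, "/api/tags", port)
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return parse_model_names(response.read().decode("utf-8"))
```

From a second machine on the network, `port_is_open("192.168.1.50")` should return True once OLLAMA_HOST is set as described, and `list_models("192.168.1.50")` should return the names of the downloaded models. If the port check fails, the server is most likely still bound to localhost only, or a firewall is blocking port 11434.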